By Abhishek Patel · April 25, 2026

A healthcare data management platform is the difference between “we have data everywhere” and “we can actually trust what we see.” If you’re a payer, provider, ACO, or life sciences team trying to unify EHR, claims, labs, and patient engagement data, you already know the pain: duplicates, missing context, slow pipelines, and dashboards nobody believes.

And here’s the kicker. Most organizations don’t fail because they lack data. They fail because they can’t operate data securely and repeatably, with governance that doesn’t turn into a six-month committee meeting.

So I’m going to walk you through what a healthcare data management platform (HDMP) really is, what capabilities matter, how to think about architecture and governance, and how to pick a platform without getting trapped in vendor promises. We’ll also cover what competitors often skip: AI readiness, data observability, and a practical standardization playbook you can actually run.

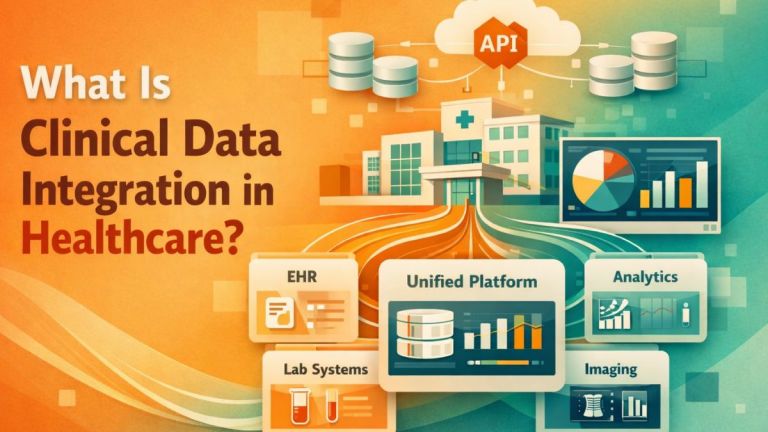

What Is a Healthcare Data Management Platform

A healthcare data management platform, or HDMP, is the system of record for how healthcare data is ingested, standardized, linked, secured, governed, and served to downstream users and applications. It’s not just storage. It’s the operating layer that turns raw feeds into trusted, auditable, reusable data products.

Now, you’ll see vendors call all kinds of things “platforms.” Some are analytics suites. Some are integration tools. Some are data lakes with a healthcare skin. A real HDMP sits across the lifecycle: from ingestion to identity resolution to governance to delivery.

HDMP vs data warehouse vs data lake vs analytics platform

A data warehouse is usually curated, structured, and optimized for reporting. It’s great for quality measures and finance. But it can be rigid, slow to change, and painful for semi-structured clinical data.

A data lake is a landing zone for raw and semi-structured data. It’s great for scale and flexibility. But lakes get swampy fast when you don’t enforce metadata, quality checks, and access controls.

An analytics platform is what users touch: dashboards, semantic layers, notebooks, measure engines. It’s valuable, but it’s downstream. If the data is wrong, the charts just make you wrong faster.

An HDMP usually includes parts of all three, plus healthcare-specific plumbing: FHIR and HL7 ingestion, terminology normalization, patient matching, consent-aware access, and PHI-safe workflows. Think of it as the enterprise control plane for healthcare data.

Who uses it

Payers use HDMPs for claims aggregation, risk adjustment, utilization management, network analytics, and fraud, waste, and abuse detection. They care about scale, latency, and repeatable measure logic.

Providers use them to unify EHR, scheduling, labs, imaging, and patient engagement data for care gaps, throughput, clinical operations, and quality reporting. They care about clinical context and trust.

ACOs live and die by attribution, measure accuracy, and near-real-time care gap closure. Identity resolution isn’t “nice to have” for them. It’s oxygen.

Life sciences teams use HDMPs for real-world evidence (RWE), trial feasibility, data linking, and longitudinal patient journeys. They care about provenance, de-identification, and reproducibility (and yes, audit readiness).

Core Capabilities to Expect

If you’re shopping, don’t start with the UI. Start with the boring stuff. The boring stuff is where projects succeed or quietly die.

Data ingestion and aggregation

A good HDMP ingests from the messy reality of healthcare:

- EHR data via FHIR APIs, HL7 v2 feeds, CCD and CDA documents, and flat exports when that’s all you can get.

- Claims and remittances via X12 837 and 835, plus eligibility (270/271) and authorizations (278).

- Labs from HL7 v2 ORU messages and reference lab portals.

- Imaging metadata and clinical context via DICOM and radiology systems.

- Social determinants of health (SDoH) data from screening tools, community partners, and public datasets (often the least standardized data you’ll ever touch).

But don’t just ask “can you ingest it?” Ask: how do you monitor it, how do you replay it, and how do you prove completeness? A broken admit, discharge, transfer (ADT) feed can quietly wreck attribution for weeks.
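Here’s a minimal sketch of what “prove completeness” can look like, assuming you capture an arrival timestamp for every message and the source can tell you how many messages it sent. The function names and the 30-minute threshold are my own illustrative choices, not any vendor’s API.

```python
from datetime import datetime, timedelta

def find_silent_gaps(arrival_times, max_gap):
    """Return (start, end) pairs where the feed was silent longer than max_gap."""
    ordered = sorted(arrival_times)
    return [
        (earlier, later)
        for earlier, later in zip(ordered, ordered[1:])
        if later - earlier > max_gap
    ]

def completeness_ratio(expected_count, landed_count):
    """Share of messages the source reports sending that actually landed."""
    if expected_count == 0:
        return 1.0
    return landed_count / expected_count

# Hypothetical arrival times for one morning of an ADT feed.
arrivals = [
    datetime(2026, 4, 1, 8, 0),
    datetime(2026, 4, 1, 8, 5),
    datetime(2026, 4, 1, 11, 30),  # long silence before this message
    datetime(2026, 4, 1, 11, 32),
]
gaps = find_silent_gaps(arrivals, max_gap=timedelta(minutes=30))
for start, end in gaps:
    print(f"Feed silent from {start} to {end}")
```

A gap by itself doesn’t prove data loss (overnight volumes drop naturally), so in practice you’d pair it with the count reconciliation and tune thresholds per feed.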

Data standardization strategy

This is where winners separate from checkbox vendors. A real healthcare data standardization strategy blends structure standards and clinical terminologies:

- FHIR and HL7 for structure and exchange

- ICD-10 for diagnoses

- SNOMED CT for clinical concepts

- LOINC for labs and observations

So what does “standardization” mean in practice? It means mapping, versioning, and testing. It means handling local codes. It means knowing that “HbA1c” could arrive as 20 different LOINC-like strings depending on the lab and the interface engine.

And yes, you need a terminology service or terminology layer. If the platform doesn’t have one, you’ll build it anyway (usually in a panic, right before a reporting deadline).
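To make “mapping, versioning, and handling local codes” concrete, here’s a small sketch of a terminology lookup. The source names, local codes, and map version are hypothetical; the LOINC code 4548-4 (hemoglobin A1c in blood) is the only real identifier in it. Treat it as an illustration of the shape, not a terminology service.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Mapping:
    target_system: str
    target_code: str
    map_version: str  # lets you reproduce results after the map changes

# Hypothetical local codes, keyed by (source system, normalized local code).
LOCAL_TO_STANDARD = {
    ("lab_a", "HBA1C"): Mapping("LOINC", "4548-4", "2026.1"),
    ("lab_b", "A1C"): Mapping("LOINC", "4548-4", "2026.1"),
    ("lab_b", "HGB A1C"): Mapping("LOINC", "4548-4", "2026.1"),
}

def normalize(code):
    """Uppercase and collapse whitespace so trivial variants map the same way."""
    return " ".join(code.upper().split())

def map_local_code(source, local_code):
    """Return the mapping, or None so the code can be routed to steward review."""
    return LOCAL_TO_STANDARD.get((source, normalize(local_code)))

def unmapped_codes(rows):
    """Collect (source, code) pairs that still need a steward's attention."""
    return sorted({
        (source, normalize(code))
        for source, code in rows
        if map_local_code(source, code) is None
    })

rows = [("lab_a", "hba1c"), ("lab_b", " hgb  a1c "), ("lab_a", "GLU")]
print(unmapped_codes(rows))
```

The useful part is the None path: unmapped codes become a work queue instead of silently dropping out of your measures.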

Master data management and identity resolution

Unified patient record is the headline. Identity resolution is the hard part.

You want patient matching that supports deterministic and probabilistic logic, survivorship rules, householding when relevant, and transparency into why records were linked. If a clinician challenges a match, you need to explain it. “The model said so” won’t fly.
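Here’s a rough sketch of a matcher that can explain itself. It assumes a deterministic rule on MRN plus facility, and a simple points-based agreement score standing in for a real probabilistic model (production matchers usually estimate weights from data). The field names, points, and thresholds are all made up for illustration.

```python
# Hypothetical agreement points per field, summing to 100.
FIELD_POINTS = {"last_name": 25, "first_name": 15, "dob": 35, "zip": 10, "phone": 15}

def _same(left, right):
    """True when both values are present and equal after trimming and lowercasing."""
    return bool(left) and bool(right) and left.strip().lower() == right.strip().lower()

def match_patients(a, b, match_at=85, review_at=60):
    """Return a decision plus the reasons behind it."""
    # Deterministic rule: the same MRN issued by the same facility.
    if _same(a.get("mrn"), b.get("mrn")) and _same(a.get("facility"), b.get("facility")):
        return {"decision": "match", "score": 100,
                "reasons": ["deterministic: MRN and facility agree"]}
    # Scored rule: add points for each demographic field that agrees.
    score, reasons = 0, []
    for field, points in FIELD_POINTS.items():
        if _same(a.get(field), b.get(field)):
            score += points
            reasons.append(f"{field} agrees (+{points})")
    if score >= match_at:
        decision = "match"
    elif score >= review_at:
        decision = "review"  # route to a human instead of guessing
    else:
        decision = "no_match"
    return {"decision": decision, "score": score, "reasons": reasons}

rec_a = {"mrn": "A100", "facility": "north", "first_name": "Jane", "last_name": "Doe",
         "dob": "1980-01-01", "zip": "30301", "phone": "555-0100"}
rec_b = {"mrn": "B200", "facility": "south", "first_name": "JANE", "last_name": "doe ",
         "dob": "1980-01-01", "zip": "30309", "phone": "555-0100"}
result = match_patients(rec_a, rec_b)
print(result["decision"], result["score"], result["reasons"])
```

The reasons list is the point. When a clinician challenges a link, you can show which fields agreed and how much each one counted.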

Provider identity matters too. If you can’t accurately resolve NPIs, tax IDs, locations, and affiliations over time, network analytics and referral patterns turn into fiction.

Real-world scenario: I’ve seen a health system with a 12–18% duplicate patient rate in downstream analytics because registration workflows varied by facility. That one issue inflated readmission counts and broke care management lists. Fixing it wasn’t glamorous, but it paid off fast.

Data quality, lineage, observability

Most competitor content talks about “data quality.” Few talk about data observability like it’s production software. But that’s exactly what it is.

You want:

- Automated checks for freshness, completeness, schema drift, and outliers

- Lineage from source to curated layer to measure output

- SLAs for critical datasets like ADT, claims, and problem lists

- Incident response workflows: alerting, triage, root cause, and postmortems

So ask a blunt question: when an HL7 segment changes and a field shifts, how quickly will you know? Minutes? Days? Or when a VP complains in a meeting?
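One way to get to “minutes” is a cheap shape check on every batch. The sketch below assumes records have already been parsed into dictionaries; the field names and patterns are hypothetical, and the 98% threshold is just a starting point.

```python
import re

# Hypothetical expectations for a parsed patient feed.
EXPECTED_PATTERNS = {
    "patient_id": r"\d+",
    "birth_date": r"\d{8}",   # YYYYMMDD
    "sex": r"[A-Z]",
}

def drift_report(records, expected=EXPECTED_PATTERNS, min_valid_rate=0.98):
    """Flag fields whose values stop matching the expected pattern."""
    report = {}
    for field, pattern in expected.items():
        values = [str(r.get(field) or "") for r in records]
        valid = sum(1 for v in values if re.fullmatch(pattern, v))
        rate = valid / len(values) if values else 0.0
        report[field] = {"valid_rate": rate, "alert": rate < min_valid_rate}
    return report

good_batch = [
    {"patient_id": "1001", "birth_date": "19800101", "sex": "F"},
    {"patient_id": "1002", "birth_date": "19751231", "sex": "M"},
]
# Simulates an upstream change that shifted two fields by one position.
shifted_batch = [
    {"patient_id": "1003", "birth_date": "F", "sex": "19900505"},
    {"patient_id": "1004", "birth_date": "M", "sex": "19850214"},
]
for name, batch in [("good", good_batch), ("shifted", shifted_batch)]:
    alerts = [f for f, r in drift_report(batch).items() if r["alert"]]
    print(name, "alerts:", alerts)
```

It won’t catch every semantic problem, but it catches the classic shifted-field failure before it reaches a dashboard.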

And track KPIs. I like a tight set: data freshness SLA compliance, duplicate identity rate, measure drift, time-to-insight, and cost-to-operate per domain. If you can’t measure reliability, you can’t improve it.
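Two of those KPIs are simple enough to compute from counts you already have. These are one possible set of definitions, assumed for illustration; your organization may define duplicate rate or freshness differently, so write the definition down before you trend it.

```python
def duplicate_identity_rate(source_records, resolved_persons):
    """Share of source records that are extra copies of an already-known person."""
    if source_records == 0:
        return 0.0
    return (source_records - resolved_persons) / source_records

def freshness_sla_compliance(delays_minutes, sla_minutes):
    """Share of loads that landed within the agreed freshness window."""
    if not delays_minutes:
        return 1.0
    on_time = sum(1 for delay in delays_minutes if delay <= sla_minutes)
    return on_time / len(delays_minutes)

# Hypothetical numbers: 1,000 registration records resolving to 850 people,
# and four loads measured against a 60-minute freshness SLA.
print(f"Duplicate rate: {duplicate_identity_rate(1000, 850):.0%}")
print(f"Freshness compliance: {freshness_sla_compliance([10, 20, 70, 30], 60):.0%}")
```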

Metadata catalog and search

If people can’t find data, they’ll re-create it. Then you’ll have five “gold” tables and none of them match.

A strong catalog includes business definitions, technical metadata, lineage, data owners, sensitivity tags, and searchable documentation. And it should be integrated with access workflows, not a dusty wiki nobody trusts.

Interoperability and Integration

Interoperability isn’t a module. It’s the whole job. Your HDMP should make it easier to connect, exchange, and evolve without breaking everything every quarter.

FHIR APIs, HL7 v2, X12, DICOM, CDA

Here’s what “must-have” looks like in the real world:

- FHIR APIs for modern EHR access, plus bulk export support where available

- HL7 v2 for ADT, orders, results, and the feeds you’ll still be running in 2030

- X12 for claims, remits, eligibility, and authorizations

- DICOM for imaging workflows and metadata, sometimes pixel data depending on use case

- CDA and CCD documents because they still show up everywhere

But don’t ignore the “unofficial” sources: CSV extracts, SFTP drops, and third-party portals. They’re messy. They’re common. And they’re often mission-critical.
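If you’ve never looked inside an HL7 v2 message, here’s a minimal sketch that pulls a few patient fields out of an ADT message. The sample message is invented, and the parser deliberately ignores repetitions, escape sequences, and custom delimiters, so treat it as a reading aid rather than something to run against production feeds.

```python
def parse_segments(message):
    """Split a pipe-delimited HL7 v2 message into segments keyed by segment ID."""
    segments = {}
    for line in filter(None, message.split("\r")):
        fields = line.split("|")
        segments.setdefault(fields[0], []).append(fields)
    return segments

def extract_patient(message):
    """Pull a handful of fields from the first MSH and PID segments."""
    segments = parse_segments(message)
    msh = segments["MSH"][0]
    pid = segments["PID"][0]
    # PID-5 is the patient name: family^given^...
    family, given = (pid[5].split("^") + [""])[:2]
    return {
        # MSH-1 is the field separator itself, so MSH-9 sits at index 8.
        "message_type": msh[8],
        "control_id": msh[9],
        "patient_id": pid[3].split("^")[0],  # PID-3, first component
        "family_name": family,
        "given_name": given,
        "birth_date": pid[7],                # PID-7
        "sex": pid[8],                       # PID-8
    }

# Invented sample message; segments are separated by carriage returns.
sample = "\r".join([
    "MSH|^~\\&|ADT_APP|HOSP_A|HDMP|HDMP|20260401083000||ADT^A01|MSG00001|P|2.5",
    "PID|1||123456^^^HOSP_A^MR||DOE^JANE||19800101|F",
])
patient = extract_patient(sample)
print(patient)
```

Real feeds add site-specific quirks on top of this, which is exactly why interface monitoring and replay matter.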

Integration patterns

You’ll see three main patterns:

- ETL when you need controlled transformations before loading curated tables

- ELT when you land raw data fast and transform in the platform for flexibility

- Streaming when latency matters, like ADT-triggered care coordination or near-real-time bed management

And then there’s integration platform as a service (iPaaS). It can be great for connector speed and operational management. But be careful: iPaaS can become a second platform with its own logic, its own costs, and its own lock-in. If your healthcare data integration strategy is “buy connectors and hope,” you’ll pay for it later.

Healthcare data integration strategy checklist

- Source inventory with owners, refresh rates, and data contracts

- Latency tiers such as real-time, hourly, daily, monthly

- Replay and backfill plan for every pipeline

- Schema change handling and versioning approach

- Testing for mappings, terminologies, and measure logic

- Operational runbooks for failures and escalations

It’s not fancy. It’s how you avoid 2 a.m. surprises.
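The checklist is easier to enforce when each source has a small machine-readable contract. Below is a sketch of that idea; the class, field names, and tier thresholds are assumptions I’m making for the example, not a standard.

```python
from dataclasses import dataclass

# Hypothetical latency tiers mapped to the maximum acceptable delay in minutes.
LATENCY_TIER_MINUTES = {"real-time": 5, "hourly": 60, "daily": 1440, "monthly": 43200}

@dataclass
class SourceContract:
    name: str
    owner: str
    latency_tier: str
    schema_version: str
    replay_supported: bool
    runbook: str = ""  # link or path to the operational runbook

def contract_gaps(contract):
    """List the checklist items this source does not yet satisfy."""
    gaps = []
    if not contract.owner.strip():
        gaps.append("no named owner")
    if contract.latency_tier not in LATENCY_TIER_MINUTES:
        gaps.append(f"unknown latency tier: {contract.latency_tier!r}")
    if not contract.schema_version.strip():
        gaps.append("no schema version")
    if not contract.replay_supported:
        gaps.append("no replay or backfill plan")
    if not contract.runbook.strip():
        gaps.append("no runbook")
    return gaps

adt_feed = SourceContract("adt_feed", "interface team", "real-time", "v3", True, "runbooks/adt.md")
sftp_extract = SourceContract("eligibility_csv", "", "weekly", "v1", False)

for contract in (adt_feed, sftp_extract):
    print(contract.name, contract_gaps(contract) or "ok")
```

Running a check like this in CI, or on a schedule, turns the checklist from a document into a gate.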

Security, Privacy, and Compliance

PHI is unforgiving. One misconfigured bucket or over-permissioned BI workspace and you’ve got an incident, not a project.

HIPAA, HITECH, GDPR, SOC 2

In the US, you’re dealing with HIPAA and HITECH, plus state privacy laws depending on where you operate. If you support EU data subjects, GDPR enters the chat fast. On the assurance side, SOC 2 reports matter, especially when you’re evaluating vendors.

But compliance isn’t the goal. It’s the floor. The real question is: can you prove who accessed what, when, and why?

Role-based access, encryption, key management

Look for:

- Least privilege access models with role-based access control (RBAC) and ideally attribute-based access control (ABAC) for finer controls

- Encryption in transit and at rest everywhere, no exceptions

- Key management that supports customer-managed keys when required

- Environment separation between dev, test, and prod with real controls, not vibes

And watch for “admin” roles that quietly grant broad access. I’ve seen teams accidentally give analysts access to raw HL7 messages because it was easier than building a governed curated layer. It was also a compliance nightmare.
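Here’s a small sketch of how role checks and attribute checks can combine so that “analyst” never quietly means “raw HL7.” The roles, layers, and attributes are hypothetical; real platforms express this in their own policy language.

```python
# Hypothetical role-to-layer grants (the role-based part).
ROLE_LAYERS = {
    "analyst": {"curated"},
    "steward": {"standardized", "curated"},
    "data_engineer": {"raw", "standardized", "curated"},
}

def can_access(user, dataset):
    """Return (allowed, reason). Denies by default."""
    if dataset["layer"] not in ROLE_LAYERS.get(user["role"], set()):
        return False, "role is not granted this layer"
    # Attribute-based checks: tenant boundary and PHI prerequisites.
    if dataset["tenant"] != user["tenant"]:
        return False, "dataset belongs to another tenant"
    if dataset["sensitivity"] == "phi" and not user.get("phi_training_current", False):
        return False, "PHI training is not current"
    return True, "allowed"

analyst = {"role": "analyst", "tenant": "aco_east", "phi_training_current": True}
raw_hl7 = {"layer": "raw", "tenant": "aco_east", "sensitivity": "phi"}
curated_encounters = {"layer": "curated", "tenant": "aco_east", "sensitivity": "phi"}

print(can_access(analyst, raw_hl7))
print(can_access(analyst, curated_encounters))
```

Returning the reason alongside the decision pays off later, because denied requests become explainable in audit logs and support tickets.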

De-identification, consent, audit trails

De-identification isn’t a one-time mask. It’s policy plus process plus enforcement. You want configurable de-identification that supports different risk levels: HIPAA Safe Harbor removal of the listed identifier types, Expert Determination, tokenization, and pseudonymization for linking.
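As a sketch of the pseudonymization piece, the example below drops a few direct identifier fields and replaces the MRN with a keyed hash so records can still be linked. The field list is a hypothetical subset, not the full set of identifiers a real policy would cover, and the hard-coded key is for demonstration only; in practice it would come from your key management service.

```python
import hashlib
import hmac

# Hypothetical subset of direct identifier fields to drop.
DIRECT_IDENTIFIER_FIELDS = {"mrn", "name", "ssn", "phone", "email", "street_address"}

def pseudonymize(identifier, secret_key):
    """Keyed hash: stable for linking, not reversible without the key."""
    normalized = identifier.strip().upper().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()

def deidentify(record, secret_key):
    """Drop direct identifiers and add a linkable patient token."""
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIER_FIELDS}
    cleaned["patient_token"] = pseudonymize(record["mrn"], secret_key)
    return cleaned

demo_key = b"demo-key-do-not-use"  # assumption: real keys live in a key manager
visit_1 = {"mrn": "a100", "name": "Jane Doe", "phone": "555-0100", "dx": "E11.9"}
visit_2 = {"mrn": " A100 ", "name": "Jane Doe", "email": "jd@example.org", "dx": "I10"}

out_1 = deidentify(visit_1, demo_key)
out_2 = deidentify(visit_2, demo_key)
print(out_1["patient_token"] == out_2["patient_token"])
```

Keep in mind that removing direct identifiers alone doesn’t make a dataset de-identified. Dates, geography, and rare conditions can still re-identify people, which is why policy and review sit on top of the code.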

Consent matters more every year. If you can’t enforce consent flags across downstream extracts and AI workloads, you’re taking on risk you probably don’t understand.
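In code, consent enforcement is a filter that denies by default and runs before anything leaves the governed layer. The consent structure and purpose names below are hypothetical; real consent models are richer (time-bound, revocable, scoped by data type).

```python
def filter_by_consent(records, consents, purpose):
    """Keep only records whose patient has consented to this purpose.

    Patients with no consent entry are excluded (deny by default).
    Returns the kept records and the number withheld, so the gap is visible.
    """
    allowed = {pid for pid, purposes in consents.items() if purpose in purposes}
    kept = [r for r in records if r["patient_id"] in allowed]
    return kept, len(records) - len(kept)

# Hypothetical consent registry: patient ID -> purposes the patient agreed to.
consents = {
    "p1": {"treatment", "research"},
    "p2": {"treatment"},
}
records = [
    {"patient_id": "p1", "note": "follow-up visit"},
    {"patient_id": "p2", "note": "annual physical"},
    {"patient_id": "p3", "note": "no consent on file"},
]
research_extract, withheld = filter_by_consent(records, consents, "research")
print(len(research_extract), "records released,", withheld, "withheld")
```

The same filter should sit in front of extracts and AI workloads alike, so there’s one place to audit.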

And yes, audit trails should be first-class: access logs, query logs, data exports, and administrative actions. If your platform can’t make audits easier, it’s not doing its job.

Governance and Operating Model

Governance is where good intentions go to die. Unless you keep it practical.

Healthcare data governance strategy

A workable healthcare data governance strategy has clear roles:

- Data owners who make decisions and accept risk

- Data stewards who manage definitions, quality rules, and issue triage

- Platform team who builds pipelines, access controls, and reliability practices

- Consumers who agree to use certified datasets and stop building shadow logic

But here’s my opinion: governance should ship value every sprint. If your governance program can’t produce a certified “diabetes registry” dataset in 30–45 days, it’s too theoretical.

So start with a few policies that matter: naming standards, data classification, access request workflows, and how measure definitions get approved and versioned. Keep it tight. Keep it enforced.

Data retention, records management, eDiscovery

Retention is not “keep everything forever.” That gets expensive and risky. Define retention by domain and purpose: claims, clinical, imaging, communications, audit logs. Align with legal and regulatory requirements, and build deletion workflows that are provable.
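Here’s a sketch of retention expressed as data rather than tribal knowledge. The retention periods are placeholders I invented for the example, not legal guidance; your counsel and regulators set the real numbers.

```python
from datetime import date

# Placeholder retention periods in years per domain (not legal guidance).
RETENTION_YEARS = {"claims": 10, "clinical": 7, "audit_logs": 6}

def add_years(day, years):
    """Add whole years, mapping Feb 29 to Feb 28 when the target year is not a leap year."""
    try:
        return day.replace(year=day.year + years)
    except ValueError:
        return day.replace(year=day.year + years, day=28)

def deletion_due(domain, last_activity, today):
    """True when the record has passed its retention period."""
    return add_years(last_activity, RETENTION_YEARS[domain]) <= today

def deletion_record(record_id, domain, last_activity, today):
    """Evidence entry to store when a deletion is actually performed."""
    return {
        "record_id": record_id,
        "domain": domain,
        "retention_years": RETENTION_YEARS[domain],
        "eligible_on": add_years(last_activity, RETENTION_YEARS[domain]).isoformat(),
        "deleted_on": today.isoformat(),
    }

today = date(2026, 4, 25)
print(deletion_due("clinical", date(2018, 1, 15), today))
print(deletion_due("claims", date(2018, 1, 15), today))
```

The evidence entry is what makes a deletion provable: it records what was removed, under which rule, and when.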

And don’t forget eDiscovery. When legal asks for records tied to a patient, provider, or episode across systems, your platform should reduce the scramble, not add to it.

Architecture for Enterprise Healthcare Data Infrastructure

If you’re building enterprise healthcare data infrastructure, architecture choices show up in your cloud bill, your security posture, and your ability to scale beyond the first use case.

Cloud vs on-prem vs hybrid and multi-tenant considerations

Cloud is usually the default now because elasticity matters and managed services reduce operational burden. But cloud doesn’t magically fix governance or identity resolution. It just gives you more places to make mistakes.

On-prem still shows up for specific constraints: legacy systems, contractual restrictions, or organizations with deep internal infrastructure teams. It can work, but upgrades and scaling are slower.

Hybrid is common in healthcare because reality is messy. You might stream ADT from on-prem interface engines while hosting the curated layers in the cloud.

Multi-tenancy matters if you’re an ACO, a payer with multiple lines of business, or a vendor supporting multiple clients. You need strong tenant isolation, separate keys, and clear data boundary enforcement. “Logical separation” is not enough unless it’s backed by controls and audits.

Reference architecture

I like a simple reference model that teams can explain on a whiteboard:

- Landing zone for raw ingestion with immutable storage and replay capability

- Standardization layer for structure normalization, terminology mapping, and identity linking

- Curated layers for domain data products like encounters, meds, labs, claims, quality measures

- Serving layer for BI, APIs, extracts, and ML features with governed access

Now, here’s the trick: your curated layers should be treated like products. Owners. SLAs. Versioning. Deprecation. If you treat them like a one-off project artifact, they’ll rot.

AI-Ready Healthcare Data

Everyone wants AI. Few are ready for it. And no, buying an LLM subscription doesn’t make your data usable.

AI-ready healthcare data is trustworthy, well-labeled, well-governed, and traceable back to source. It’s also safe to access, which is where most pilots get stuck.

AI-ready healthcare data requirements

If you want real predictive models or clinical AI, you need:

- Feature consistency across time and populations

- Labels with clear definitions, time windows, and leakage controls

- Ground truth you can defend, not “whatever the billing code says”

- Provenance so you can trace model inputs back to raw sources

And you need bias checks. Healthcare data reflects access, coding practices, and socioeconomic patterns. If you don’t measure bias, you’re just guessing with math.
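The “time windows and leakage controls” requirement from the list above is easy to state and easy to get wrong. Here’s a minimal sketch of point-in-time example building: features only look backward from an index date, and the label only looks forward. The event shape, codes, and window lengths are hypothetical.

```python
from datetime import date, timedelta

def build_example(events, index_date, lookback_days=365, outcome_days=30,
                  outcome_code="readmission"):
    """Build one training example without letting future data leak into features."""
    window_start = index_date - timedelta(days=lookback_days)
    outcome_end = index_date + timedelta(days=outcome_days)
    # Features: strictly before the index date, within the lookback window.
    history = [e for e in events if window_start <= e["date"] < index_date]
    # Label: the outcome occurring on or after the index date, within the window.
    label = any(
        e["code"] == outcome_code and index_date <= e["date"] <= outcome_end
        for e in events
    )
    return {
        "feature_codes": sorted({e["code"] for e in history}),
        "prior_event_count": len(history),
        "label": int(label),
    }

events = [
    {"date": date(2025, 11, 2), "code": "ed_visit"},
    {"date": date(2026, 1, 10), "code": "admission"},
    {"date": date(2026, 2, 1), "code": "readmission"},      # after the index date
    {"date": date(2026, 2, 3), "code": "post_discharge_call"},
]
example = build_example(events, index_date=date(2026, 1, 20))
print(example)
```

Notice that the readmission and the post-discharge call never show up as features. If they did, the model would look brilliant in testing and fail in production.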

Healthcare data management for AI

Healthcare data management for AI goes beyond tables. A lot of value is trapped in documents: notes, discharge summaries, pathology reports, referral letters.

If you’re doing retrieval-augmented generation (RAG), you’ll want a controlled pipeline for document ingestion, chunking, embeddings, and retrieval. But you also need PHI-safe model access with guardrails: redaction where appropriate, role-based retrieval, and logging of prompts and outputs.

Ask vendors how they handle:

- Embedding governance and whether embeddings are treated as sensitive derivatives

- Prompt logging and retention policies

- Evaluation with clinical reviewers and measurable error rates

- Hallucination controls such as citation requirements and retrieval constraints

Real-world example: a care management assistant that summarizes recent events can save nurses 10–15 minutes per patient review. But if it pulls the wrong patient’s note because identity resolution is weak, you’ve created a safety issue, not a productivity win.
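One guardrail against that failure is to filter by patient and role before any ranking happens, and to log every retrieval. The sketch below uses simple keyword overlap as a stand-in for embedding similarity, and the chunk structure, roles, and log format are all assumptions for illustration.

```python
def retrieve_chunks(chunks, patient_id, user_role, query_terms, audit_log, top_k=3):
    """Return the best-matching chunks for one patient that this role may see."""
    # Hard filters first: wrong-patient or wrong-role chunks never reach ranking.
    allowed = [
        c for c in chunks
        if c["patient_id"] == patient_id and user_role in c["allowed_roles"]
    ]
    # Keyword overlap stands in for vector similarity in this sketch.
    def score(chunk):
        text = chunk["text"].lower()
        return sum(1 for term in query_terms if term.lower() in text)
    ranked = sorted(allowed, key=score, reverse=True)[:top_k]
    audit_log.append({
        "patient_id": patient_id,
        "role": user_role,
        "query_terms": list(query_terms),
        "returned_chunk_ids": [c["id"] for c in ranked],
    })
    return ranked

chunks = [
    {"id": "c1", "patient_id": "p1", "allowed_roles": {"nurse"}, "text": "Discharged on insulin."},
    {"id": "c2", "patient_id": "p2", "allowed_roles": {"nurse"}, "text": "Insulin dose changed."},
    {"id": "c3", "patient_id": "p1", "allowed_roles": {"psychiatry"}, "text": "Therapy note."},
    {"id": "c4", "patient_id": "p1", "allowed_roles": {"nurse"}, "text": "Follow-up scheduled."},
]
audit_log = []
results = retrieve_chunks(chunks, "p1", "nurse", ["insulin"], audit_log)
print([c["id"] for c in results])
```

The filter only helps if the patient ID on each chunk is right in the first place, which is one more reason identity resolution comes before AI.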

Healthcare data strategy for AI adoption

A healthcare data strategy for AI adoption needs people, process, and tech aligned.

People: you need data stewards, clinical SMEs, and security partners involved early. Not at the end when it’s time to approve access.

Process: define model intake, approval, monitoring, and rollback. Models drift. Measures drift too. If you don’t monitor, you won’t notice until outcomes change.

Tech: secure sandboxes, governed feature stores or feature layers, and a clear path from pilot to production. Most teams can prototype in 2–4 weeks. Production is where it gets real.

Use Cases and Outcomes

Use cases are where you prove value. Not with slides. With numbers.

Population health, risk stratification, quality measures

This is the classic HDMP win: unify clinical and claims, resolve identities, and compute measures consistently. You can run HEDIS-like measures, close care gaps, and prioritize outreach with risk stratification.

But watch for measure drift. If your numerator logic changes because a code set updated and nobody versioned it, you’ll “improve” performance on paper while reality stays flat. That’s a bad day.
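Here’s a small sketch of how pinning a value set version makes that drift visible. The value set contents and version labels are invented for the example (they are not an official measure specification); the ICD-10-CM codes themselves are real diabetes codes.

```python
# Hypothetical value set versions; a real program would load these from a
# terminology service with effective dates.
VALUE_SETS = {
    ("diabetes_dx", "2025"): {"E11.9", "E11.65"},
    ("diabetes_dx", "2026"): {"E11.9", "E11.65", "E10.9"},
}

def count_matching(patients, value_set_name, version):
    """Count patients with at least one diagnosis in the pinned value set version."""
    codes = VALUE_SETS[(value_set_name, version)]
    return sum(1 for p in patients if codes & set(p["dx_codes"]))

patients = [
    {"id": "p1", "dx_codes": ["E11.9"]},
    {"id": "p2", "dx_codes": ["E10.9"]},
    {"id": "p3", "dx_codes": ["I10"]},
    {"id": "p4", "dx_codes": ["E11.65", "I10"]},
]
count_2025 = count_matching(patients, "diabetes_dx", "2025")
count_2026 = count_matching(patients, "diabetes_dx", "2026")
print(f"2025 value set: {count_2025}, 2026 value set: {count_2026}, "
      f"drift from code set change alone: {count_2026 - count_2025}")
```

Same patients, same data, different answer. Reporting both numbers during a version change lets you separate real performance movement from definition movement.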

Revenue cycle, utilization management, fraud, waste, abuse

Providers want fewer denials and faster cash. Payers want better authorization decisions and fewer inappropriate claims. A platform that connects clinical documentation, orders, and claims data can highlight missing documentation, coding issues, and utilization outliers.

And for fraud, waste, and abuse, the platform matters because you need scale and traceability. When investigators ask “why did the model flag this,” you need lineage and evidence, not a black box.

Clinical operations, care gaps, patient engagement

Operational use cases are often the fastest to feel. ED throughput. Bed management. No-show prediction. Referral leakage. Care gap alerts pushed into workflows.

But here’s the honest truth: if the platform can’t deliver timely data, operational teams won’t adopt it. A daily batch for bed management is basically a history lesson.

How to Choose the Right Platform

Choosing an HDMP is a long-term decision. You’re not buying software. You’re buying constraints you’ll live with for 3–7 years.

Requirements matrix

I like to build a requirements matrix across a few dimensions:

- Data sources you must support in year one: EHR, claims, labs, imaging, SDoH

- Latency needs: real-time ADT, daily claims, monthly eligibility, etc.

- Scale: number of members, patients, encounters, documents, and expected growth

- Governance: catalog, lineage, access workflows, stewardship model

- Security: consent enforcement, de-identification, auditability

- Delivery: BI, APIs, extracts, ML features, and operational apps

Then score vendors against it with evidence. Demos are nice. Reference calls are better. A paid pilot with success criteria is best.
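The scoring itself can be a few lines of arithmetic. In this sketch the weights, vendor names, and 1 to 5 scores are made up; the only real idea is that weights get agreed before the demos, and every score should point at evidence.

```python
# Hypothetical weights per dimension (they sum to 100).
WEIGHTS = {
    "sources": 25, "latency": 15, "scale": 10,
    "governance": 20, "security": 20, "delivery": 10,
}

def weighted_score(scores, weights=WEIGHTS):
    """Weighted average of 1-5 scores; fails loudly if a dimension was skipped."""
    missing = sorted(set(weights) - set(scores))
    if missing:
        raise ValueError(f"no score recorded for: {missing}")
    total = sum(weights[dim] * scores[dim] for dim in weights)
    return total / sum(weights.values())

vendor_a = {"sources": 4, "latency": 3, "scale": 5, "governance": 4, "security": 5, "delivery": 3}
vendor_b = {"sources": 5, "latency": 4, "scale": 4, "governance": 2, "security": 3, "delivery": 4}

for name, scores in [("Vendor A", vendor_a), ("Vendor B", vendor_b)]:
    print(f"{name}: {weighted_score(scores):.2f}")
```

With these made-up inputs, the vendor with the flashier source coverage loses to the one with stronger governance and security, which is the kind of trade-off a matrix is supposed to surface.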

Vendor evaluation criteria

Here’s what I’d actually evaluate:

- Time-to-value: can you produce one trusted domain dataset in 30–60 days?

- Interoperability depth: not just “supports FHIR,” but which resources, bulk export, terminology handling, and HL7 quirks

- Total cost to operate: platform fees, compute, storage, integration costs, and people required to run it

- Lock-in risk: proprietary data models, closed transformation logic, difficult export paths

- Observability maturity: monitoring, SLAs, and incident workflows baked in

And ask about failure modes. What happens when a source feed breaks? What happens when you need to reprocess 90 days of data? If the answer is hand-wavy, keep shopping.

Build vs buy vs extend

Build makes sense when you have strong engineering talent, clear standards, and unique needs. But you’ll own everything: connectors, mapping, governance workflows, and security patterns. That’s a real commitment.

Buy makes sense when speed matters and the vendor truly supports your sources and compliance needs. But you need exit plans and data portability.

Extend is common: you might have a cloud data platform already and add healthcare-specific components on top, or use low-code tools for workflows. Power Platform-style approaches can work for intake, stewardship tasks, and lightweight apps, but don’t confuse workflow apps with the core data backbone.

So what’s my bias? If you’re early, buy or extend to get momentum. If you’re mature and have scale, you’ll likely end up with a hybrid of vendor capabilities plus internal engineering.

Implementation Roadmap

Implementation is where optimism meets reality. A roadmap keeps you honest.

30 60 90 day plan

First 30 days: lock the scope. Pick 1–2 sources, define success metrics, stand up environments, and build the landing zone with logging and access controls. Don’t boil the ocean.

Days 31–60: implement identity resolution for the pilot domain, build standardization mappings, and publish the first curated dataset with a catalog entry, owner, and SLA. This is where trust is earned.

By 90 days: operationalize. Add observability checks, runbooks, and a repeatable pipeline template. Deliver a real use case: a care gap list, a quality measure, or a utilization dashboard that stakeholders will actually use.

Now, a reality check: enterprise rollouts typically take 6–12 months to cover multiple domains well. But you should show tangible value in the first 90 days. If you can’t, something’s off.

Change management and adoption

Adoption isn’t training. It’s behavior change.

Make it easy to do the right thing: certified datasets, simple access requests, clear definitions, and a visible support channel. And retire the old stuff. If five teams can still pull from five different extracts, they will.

Also, celebrate the unsexy wins. Reducing duplicate identities from 15% to 5% isn’t flashy, but it can improve measure accuracy and care management targeting overnight.

FAQs

What data should be centralized first

Start with the data that drives the highest-value decisions with the least integration friction. For many organizations, that’s ADT plus a core slice of claims or a focused set of FHIR resources like Patient, Encounter, Condition, MedicationRequest, Observation.

If you’re provider-led, ADT and encounters often unlock operational wins fast. If you’re payer-led, claims and eligibility often come first. Either way, pick one domain and make it trustworthy before expanding.

How long does implementation take

A pilot can take 6–12 weeks if you keep scope tight and have source access. A broader enterprise rollout usually takes 6–12 months, sometimes longer if identity resolution, contracting, or security approvals drag.

The biggest variable isn’t the tech. It’s access to source systems, data quality, and decision speed.

What’s the minimum governance needed

Minimum viable governance is simple:

- Named owners for each curated dataset

- Data classification and access request workflow

- Definitions for key fields and measures in a catalog

- Quality checks with alerting for freshness and completeness

- Audit logs for access and exports

Anything less and you’ll end up with “fast data” that nobody trusts. And that’s just expensive noise.

A healthcare data management platform isn’t about storing more data. It’s about making data usable: interoperable, standardized, linked, governed, observable, and secure enough for PHI and ambitious enough for AI.

So focus on the fundamentals: ingestion that’s monitored, a practical healthcare data standardization strategy with terminologies, identity resolution you can explain, and governance that ships real datasets with owners and SLAs. Then push further: data observability, reliability practices, and an AI readiness blueprint that includes PHI-safe access and evaluation.

If you choose well and implement with discipline, you’ll get more than dashboards. You’ll get faster decisions, higher measure accuracy, lower cost-to-operate, and a data foundation that can actually support modern care and analytics. That’s the point. Everything else is just software.